Integration best practices - thoughts on ESB and SOA

Door Geert Liet / jan 2016 / 1 Min

Door Niek Knuiman / / 2 min

In his book "Clean Architecture", Robert C. Martin talks about the evolution of software engineering by acknowledging three restrictions that were made in the shape of paradigms:

He talks about how those restrictions simplified and structured the way we write code now.

Furthermore, he mentions that no additional fundamental restrictions will be found. Frameworks, libraries, and even languages may create abstractions. But at its core, this is what we work with from now on. While there are far more important subjects with more practical uses in Clean Architecture, I'll dissect this philosophy about the positive effect of restrictions, because... well... it's interesting!

Let's start by quickly defining what a restriction is in this context. A restriction is something that is imposed on the developer that cannot be worked around with a minimal amount of effort. Telling people that your test coverage should at least be 80% is not a restriction. Forcing merge requests to have at least 80% coverage before they can be merged is.

A restriction has a negative ring to it. Something you cannot or may not do. But the book seems to argue that the three restricting paradigms are the core reason that software architecture has evolved to its current, better state.

When I read that, I found that restricting is a common factor in modern programming, from merge requests that are locked by automatic quality gates to the final keyword. It's all to manage this abstract complexity. Adding restrictions creates a base understanding that all developers can work from so that they do not need to consume more complexity than necessary.

Different programming languages use this concept with varying degrees of severity. Object-oriented programming uses polymorphism to avoid improper access while allowing the concept of inheritance. Functional languages take it a step further and often restrict the programmer to write pure code ━ code with no side effects. Both examples show how, with the restrictions in place, the programmer can make correct assumptions without having to spend time combing through every line.

To me, this now makes perfect sense, but I never reflected on this concept and took it for granted. My next question was: What amount of restriction is advantageous, and at what point does it limit innovation or creativity? First, note that the trade-off here is with innovation or creativity and not development speed. *

It appears logical that systems with more inherent complexity benefit more from an increased amount of restrictions. Unfortunately, the higher the abstraction, the harder it is to impose restrictions. Larger systems often span multiple services. If you have read my previous blog about keeping APIs consistent between services using Pact, you know how hard it is to have strongly typed restrictions in place between services. A strongly typed syntax is a given in most languages internally without any extra steps. But this falls away as soon as you cross networks.

Simple systems, on the other hand, are easier to understand. Therefore they have less need of restrictive measures. They do still benefit from some quality gates and all language restrictions.

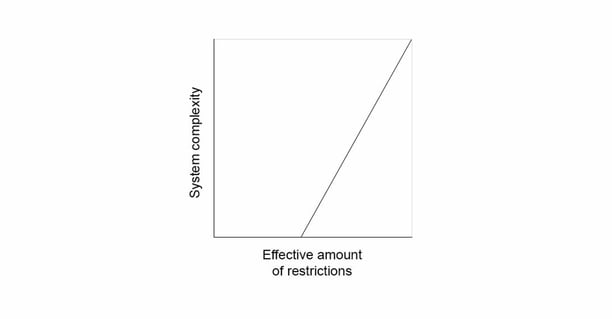

The following diagram shows a schematic relation between complexity and effective restrictions. It has no real data because this is very much dependent on the sector, developers, and the company.

It shows that every project benefits from the initial restrictions but that with more complexity, other restrictions are quickly necessary. An example of these restrictions for more complex systems is how DevOps is enforced or that every commit should have an issue identifier. With more complexity, the new restrictions can become more of an administrative nature. At the same time, the existing restrictions like quality gates should become more restrictive. This should mean that developers can make more inherent assumptions about the system, making it easy to execute localized work in a complex system.

I think that for a developer, the most straightforward takeaway is; Enjoy the effects of any restrictions which are in place instead of being annoyed by them ━ or worse, using workarounds. This is part of the reason that workarounds like reflection are considered (very) bad practice. It breaks, for example, the visibility restriction. I see the private keyword, so my assumption is that it is never accessed outside the class, and it will take hours to find the bug.

*Slow development speed can, however, be a symptom of restricting innovation. With the same amount of knowledge, a language with fundamentally more restrictions can produce a defined result at the same speed. However, if the definition is unclear, and more innovation is needed to work out the best solution (e.g. prototyping), a language without excessive restrictions may produce results quicker.

| Software Architectuur

Door Niek Knuiman / okt 2024

Dan denken we dat dit ook wat voor jou is.